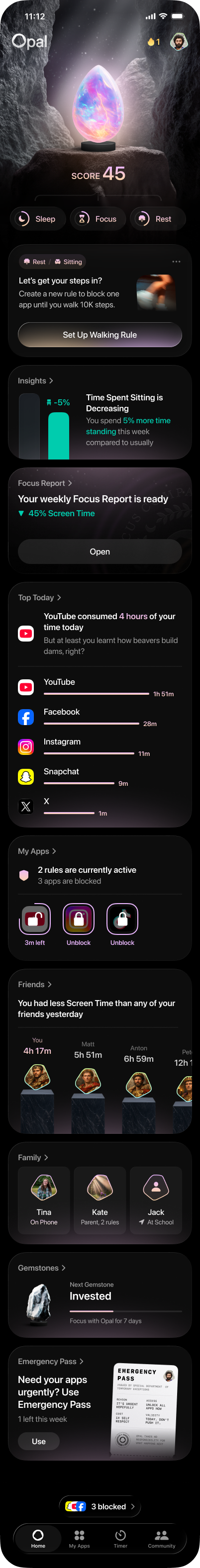

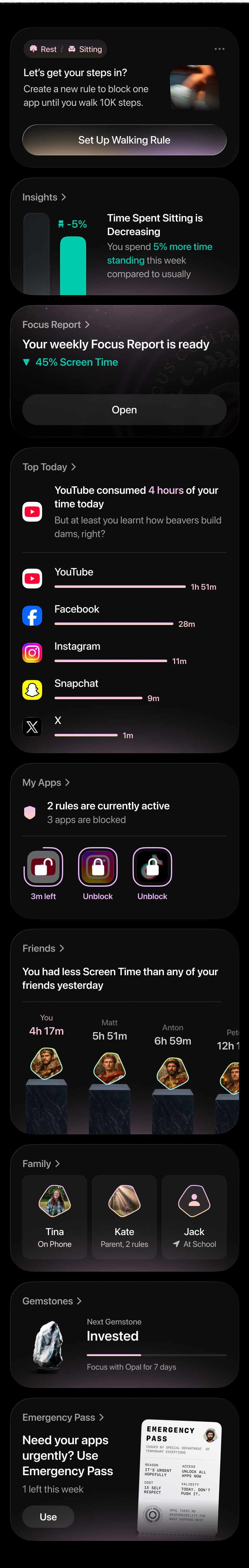

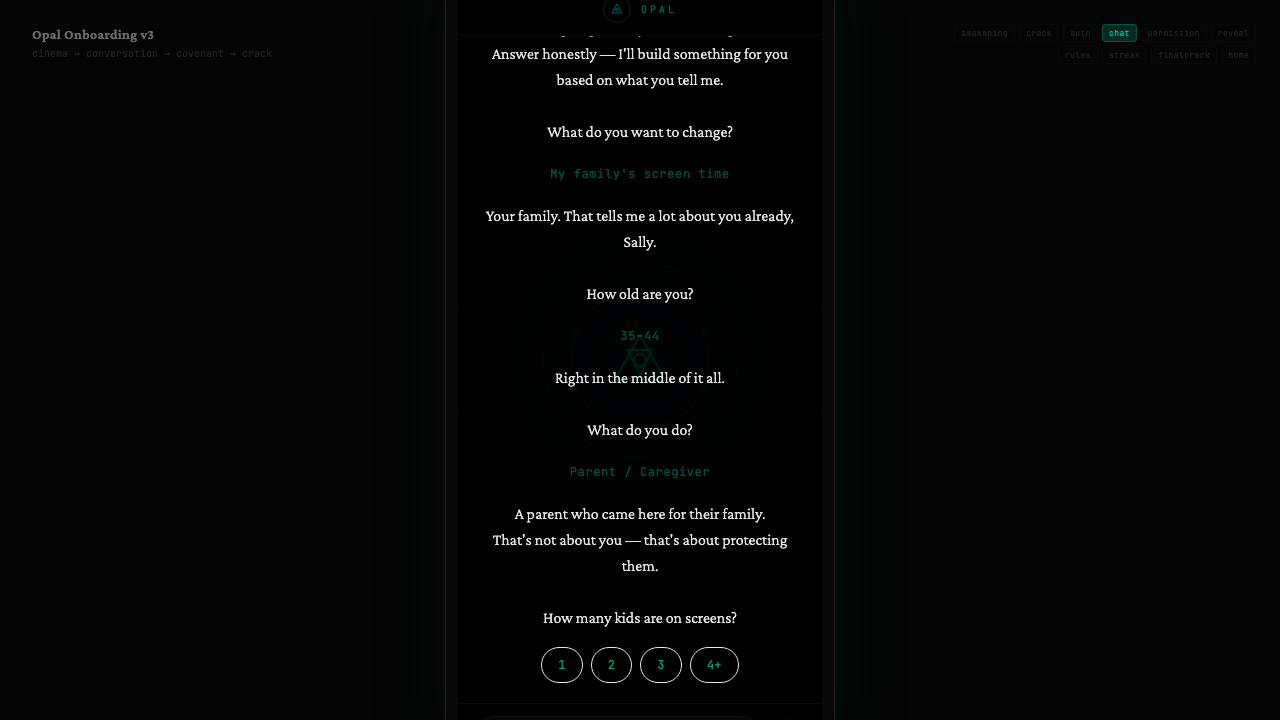

Sally stopped being generic and started getting personal.

We recovered the whole project, verified the live deployment, and shifted the design goal from "14-day theater" to Sally's real screen. The structure is now one suggestion card + 3–4 supporting widgets.

What we did

- Recovered the working local project, Figma anchors, and live deployment path.

- Verified the current live page and re-centered around the real Sally persona.

- Shifted the product goal from "story about Sally" to "Sally is the user inside the UI."

Key decisions

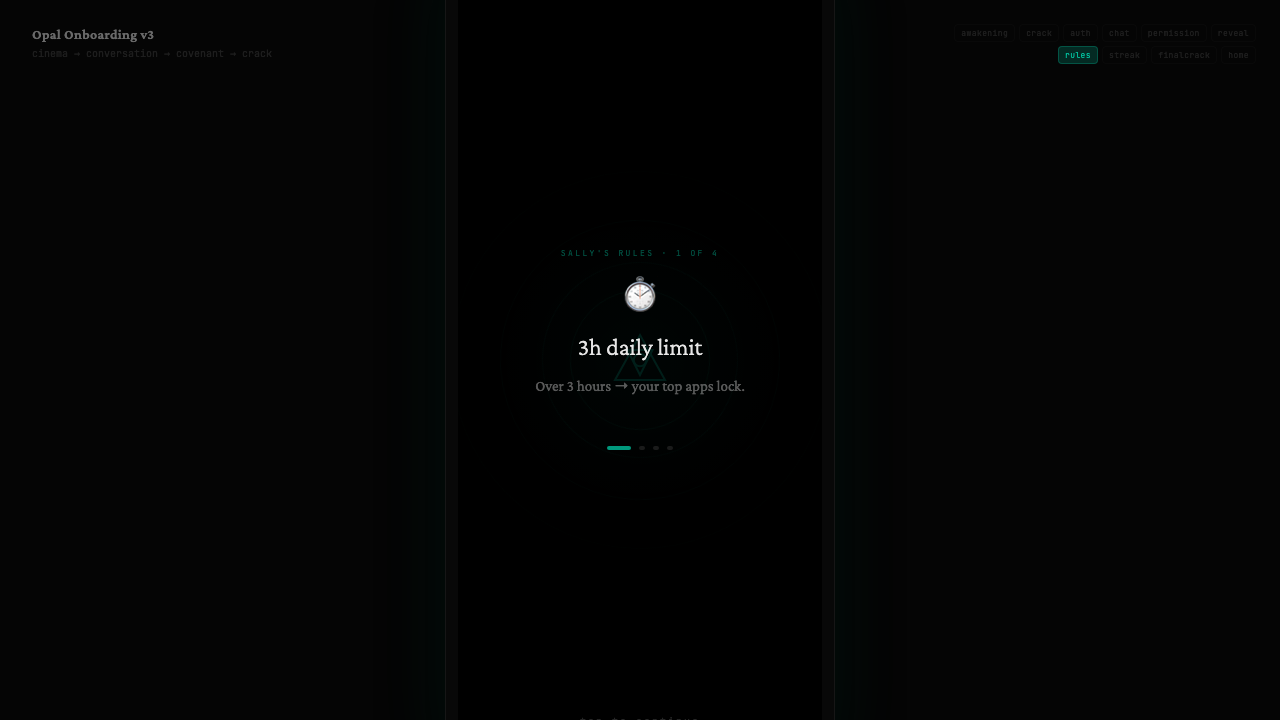

- Lead with sleep, family control, rules/consequences — not abstract wellness language.

- One suggestion card + 3–4 widgets instead of a dense feed.

- Phone mockup should feel owned by Sally, not a generic Opal demo shell.

A stronger soul — and a clearer usability problem.

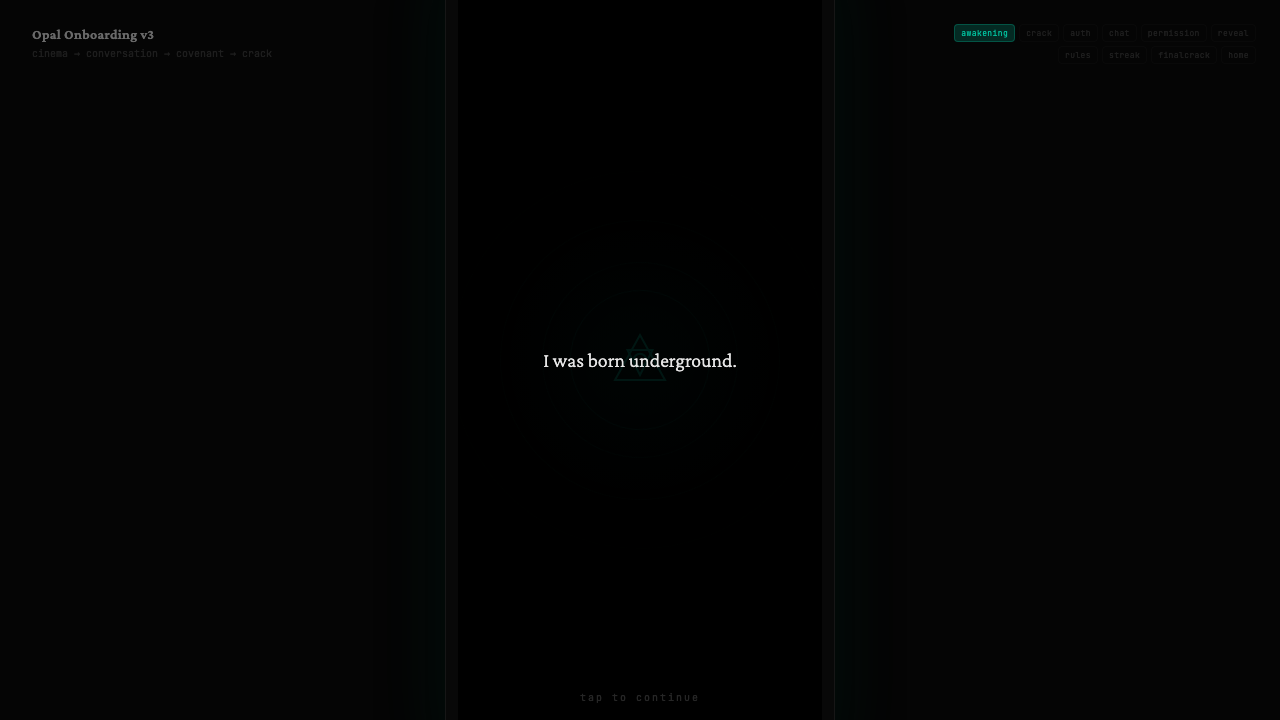

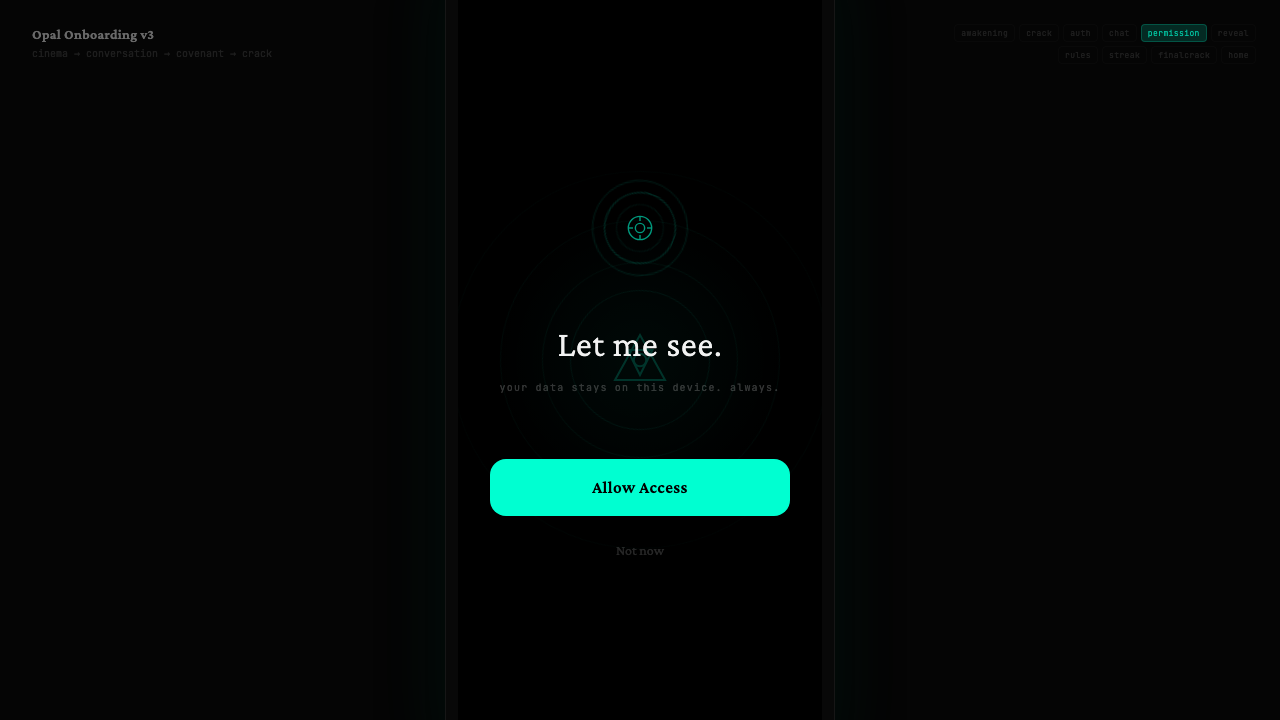

We audited the chat-onboarding prototype, pushed it toward an ancient-tech / Sheikah direction, then pressure-tested it through a Sally walkthrough. The aesthetic got more distinctive; the practical gaps also got easier to name.

What landed

- Chat is good for compressing boring intake steps.

- Full-screen beats work better for permission asks, reveals, and the "pact" moment.

- The oracular / artifact framing makes Opal feel singular vs. standard SaaS.

What broke through

- Mythology is memorable, but practical users need product value earlier.

- Family / kids device setup flow is the biggest missing piece.

- ~17 screens in one version — real drop-off risk.

Real product drift.

Cross-platform Figma audit showed iOS materially further ahead in onboarding, settings, and core surfaces.

Largest gaps

- Onboarding and Autofocus significantly more mature on iOS.

- Settings, Rules, Apps, Profile, Stats, Timer showed structural mismatch.

- Some differences were IA/product differences, not design tokens.

Why it matters

- Harder to talk about "the product" as one coherent system.

- Autofocus is a clear parity watchpoint.

The model should actually see the page.

Autofocus is simplified into screenshot-driven inference. Vision-based inference is the real unlock.

What's running

- Worker: opal-focus-v2-worker.anton-6cb.workers.dev

- Model: x-ai/grok-4.20-beta via OpenRouter

- Pipeline: blur, meme detection, allowlist, pre-viewport, per-platform rules

Direction

- Keep distraction-removal as foundation.

- Make Autofocus LLM-native and screenshot-aware.

- Vision-based inference = the real unlock.
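
A minimal sketch of what "screenshot-aware" could look like at the request level. It only builds the payload and sends nothing. It assumes the OpenAI-style chat format that OpenRouter accepts for image inputs; `build_vision_request` and the prompt text are hypothetical, not the worker's actual code.

```python
import base64

MODEL = "x-ai/grok-4.20-beta"  # model id as listed under "What's running"


def build_vision_request(screenshot_png: bytes, page_url: str) -> dict:
    """Build a chat payload that carries one screenshot as a data URL.

    Hypothetical helper: the prompt wording and function name are
    illustrative assumptions, not taken from the worker.
    """
    encoded = base64.b64encode(screenshot_png).decode("ascii")
    data_url = "data:image/png;base64," + encoded
    return {
        "model": MODEL,
        "messages": [
            {
                "role": "user",
                "content": [
                    # Text part: tells the model what to look for.
                    {
                        "type": "text",
                        "text": f"Page: {page_url}. Which regions look distracting?",
                    },
                    # Image part: the screenshot itself.
                    {"type": "image_url", "image_url": {"url": data_url}},
                ],
            }
        ],
    }
```

The existing pipeline steps (blur, allowlist, per-platform rules) would still run around a call like this; the sketch covers only the model input.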

Demo-able. Not yet daily-drivable.

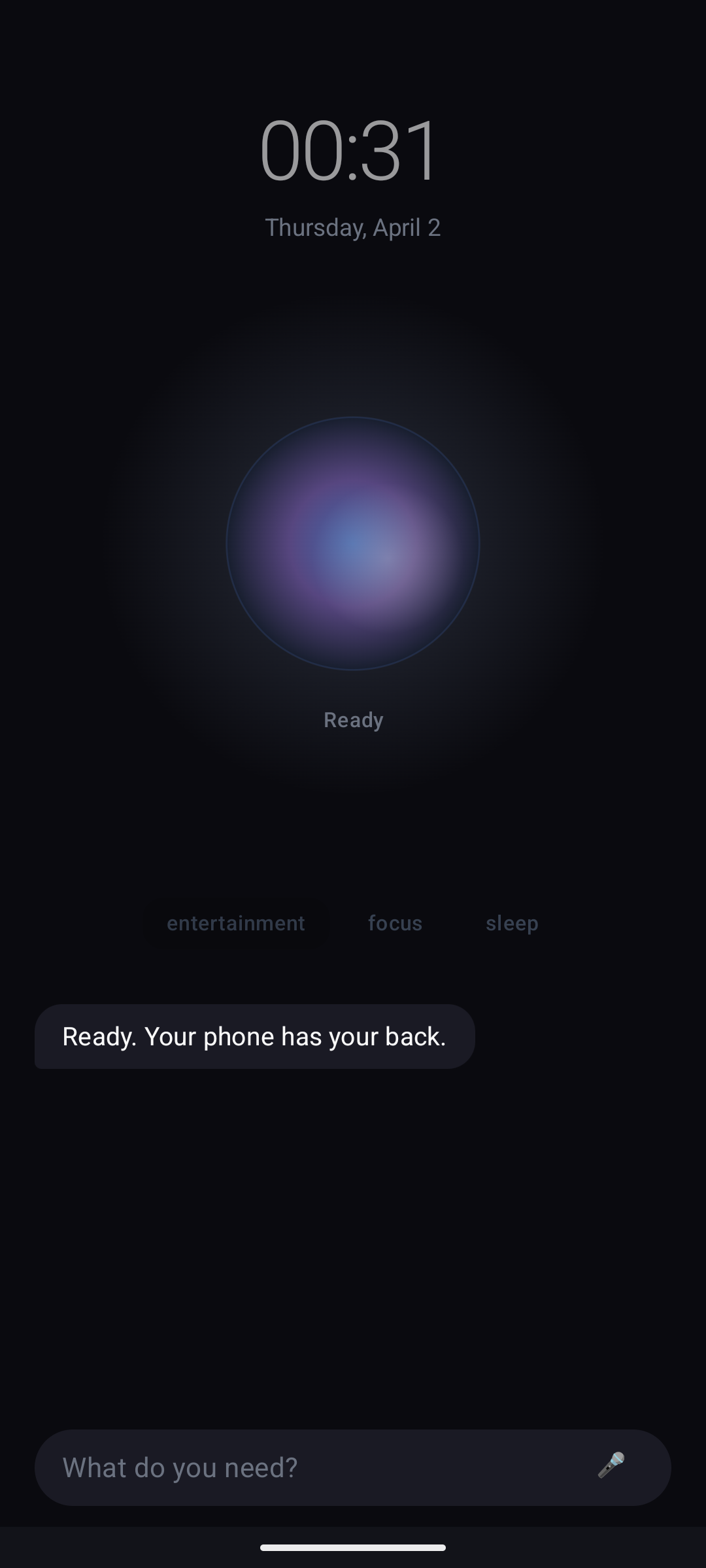

The prototype runs in the Android emulator with four modes (Utility, Entertainment, Focus, Sleep), an agent chat wired to OpenRouter with vision support, and a glowing gem orb UI. But overlay loops and incomplete image wiring block real use.

What advanced

- Builds, installs, launches in Android emulator.

- Four modes: Utility, Entertainment, Focus, Sleep.

- Agent chat wired to OpenRouter with vision support.

- Image attach button added to agent chat UI.

Current rough edges

- Overlay persistence and app reopen loops are blockers.

- Image sending: backend exists, frontend picker missing.

- Java 17 setup was needed for builds.

- Demo-able but not frictionless for daily use.

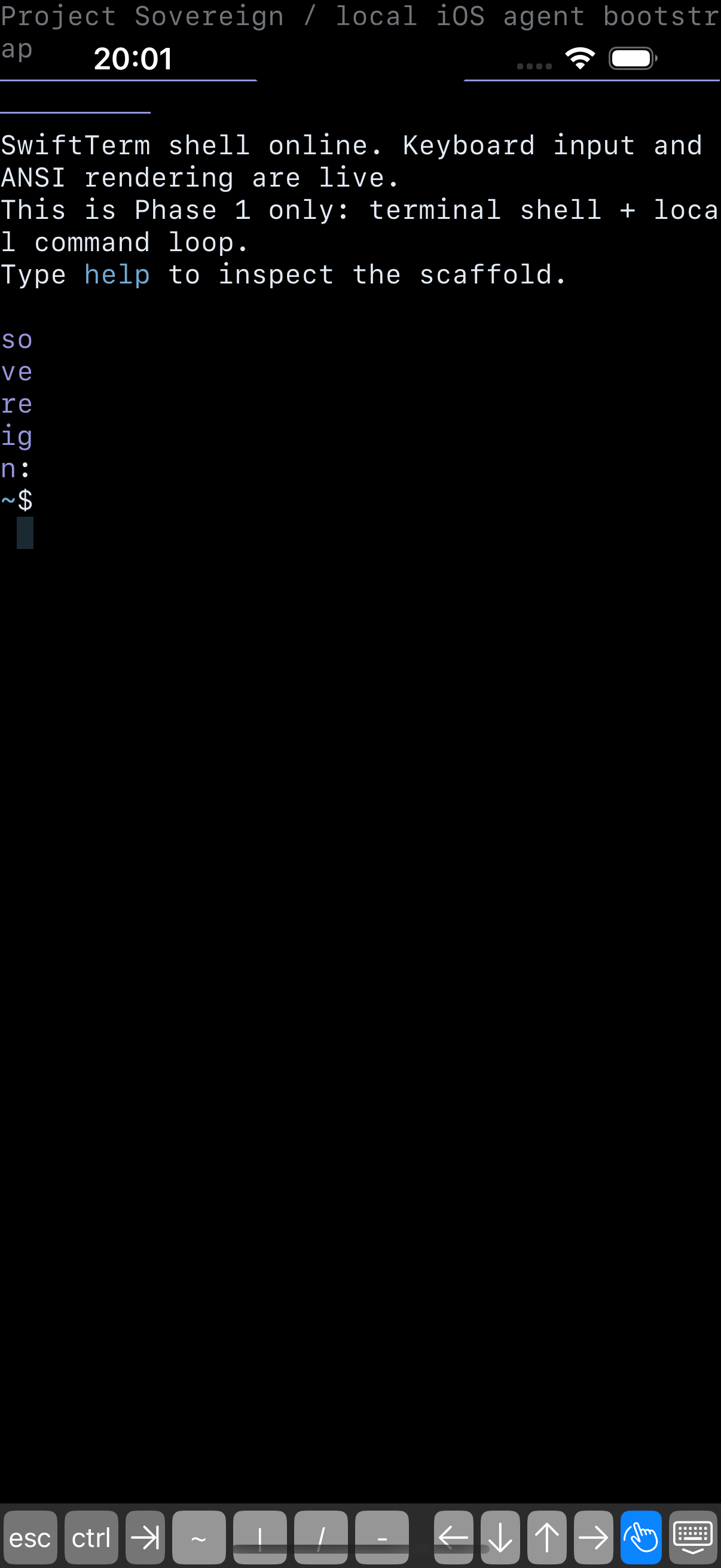

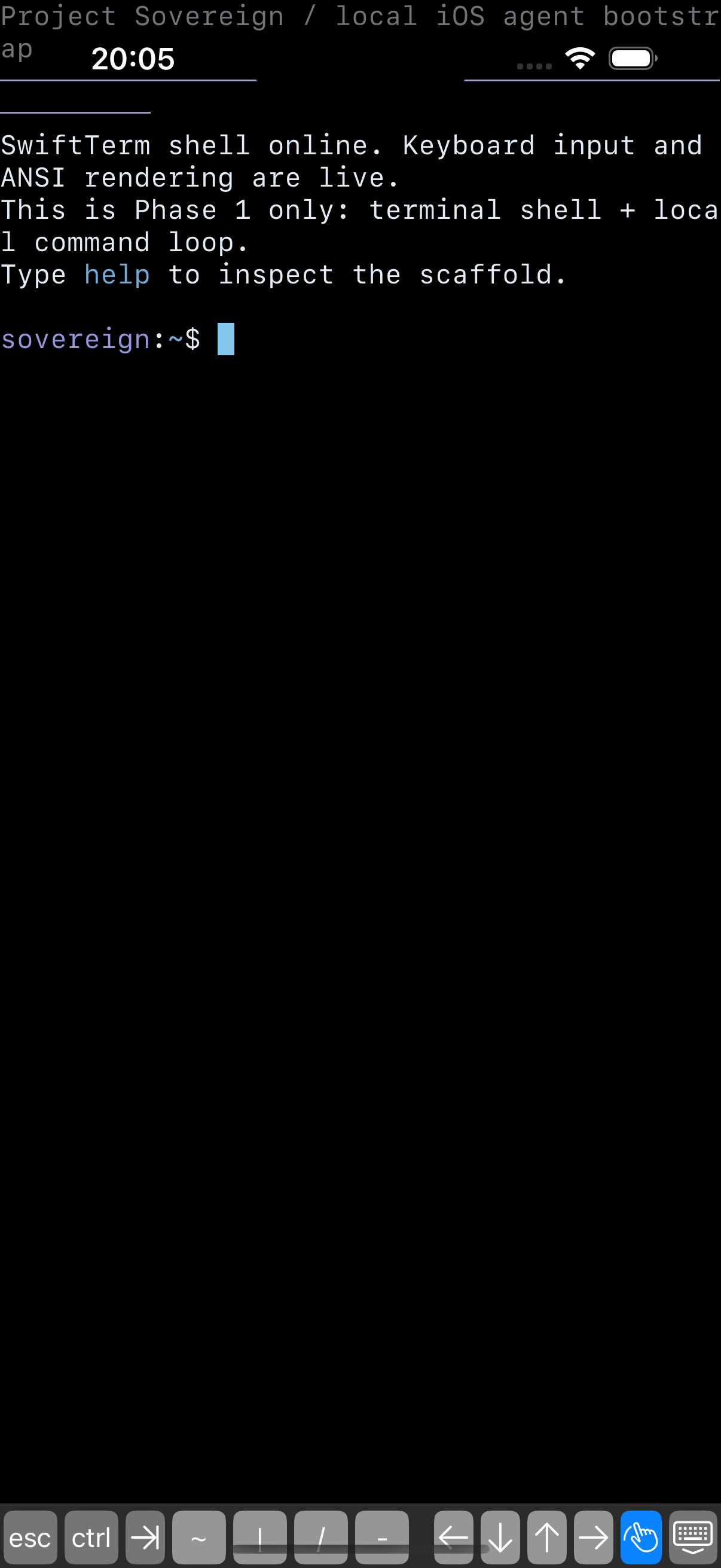

Embedded agent. No server required.

Python 3.13 runtime bundled inside a native Swift app via PythonAppleSupport xcframework. SwiftTerm provides the terminal UI. Hermes agent source ships in the app bundle. The bridge mediates between Swift and Python.

Architecture

- Embedded Python 3.13 via Python.xcframework

- SwiftTerm for terminal UI + custom keyboard bar

- Hermes agent bundled at Resources/EmbeddedPython/app/hermes-agent/

- Bridge: sovereign_hermes_bridge.py

This week

- Task 3: Designed sandboxed Hermes home/workspace seeding. SovereignBootstrap.swift spec done.

- Task 4: vendor-hermes-minimal-deps.sh wires openai, anthropic, pydantic, rich, httpx. Host-only validation.

- Blockers identified: pydantic_core, jiter, pyyaml, markupsafe, charset_normalizer — macOS .so files that can't ship on iOS.

- App builds and runs in iOS simulator.

Unresolved

- site-packages-ios-sim/ and site-packages-ios-device/ are placeholder .keep files

- No iOS-compiled Python packages yet; host validation only

- Bootstrap wiring not yet applied to ProjectSovereignApp.swift

- Task 3 designed but not implemented in code
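
The native-extension blockers listed under "This week" can be found mechanically. A minimal sketch, assuming the vendored packages sit in a plain site-packages-style directory; `packages_with_native_code` is a hypothetical helper and not part of vendor-hermes-minimal-deps.sh.

```python
from pathlib import Path

# Compiled artifacts built for the host; these would need iOS builds.
NATIVE_SUFFIXES = {".so", ".dylib"}


def packages_with_native_code(site_packages: Path) -> list[str]:
    """Return top-level entries that contain compiled extension files.

    A top-level entry is the first path component under site_packages,
    so a bare extension file at the root is reported by its filename.
    """
    found = set()
    for path in site_packages.rglob("*"):
        if path.is_file() and path.suffix in NATIVE_SUFFIXES:
            found.add(path.relative_to(site_packages).parts[0])
    return sorted(found)
```

Running this against the host-validated directory would give the list of packages that still need iOS-compiled replacements. Pure-Python packages never appear in the result.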

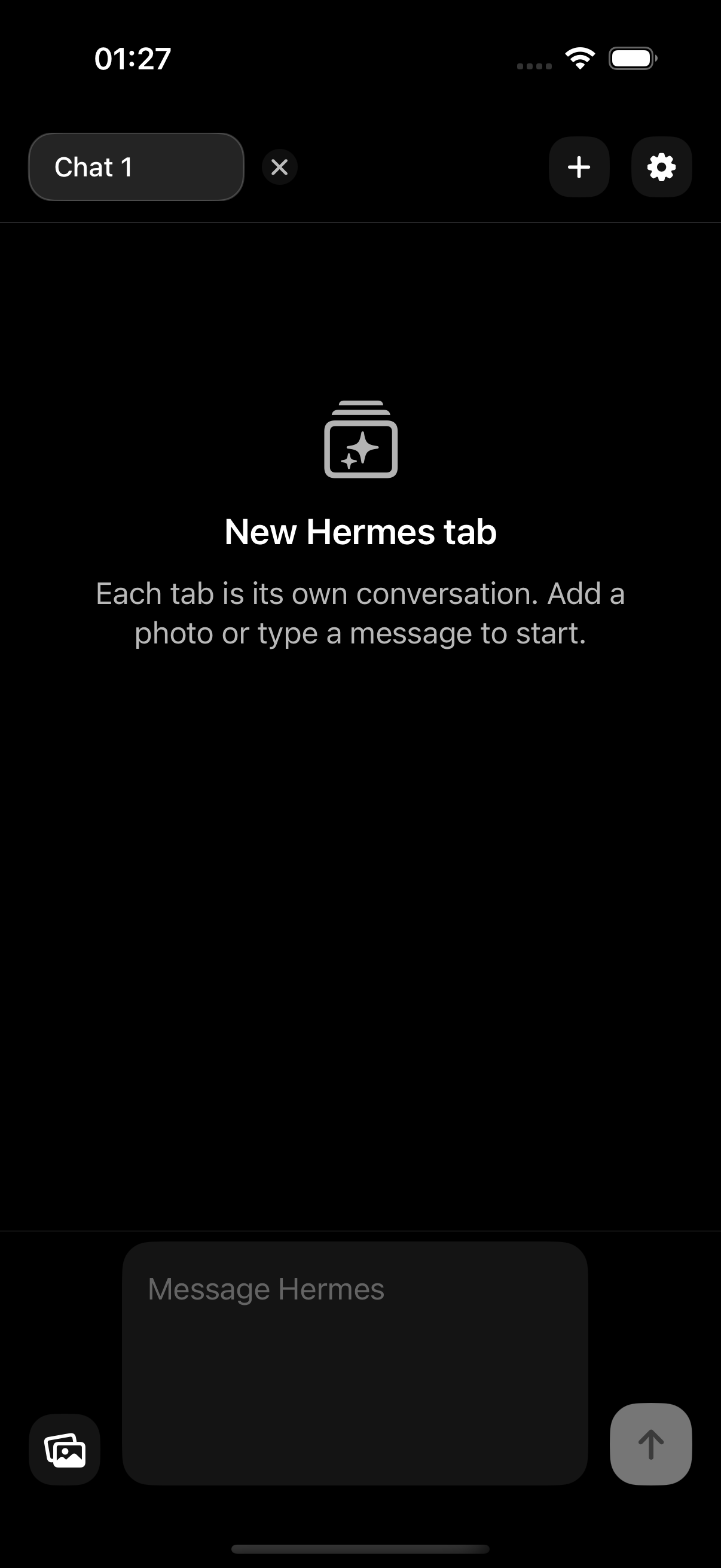

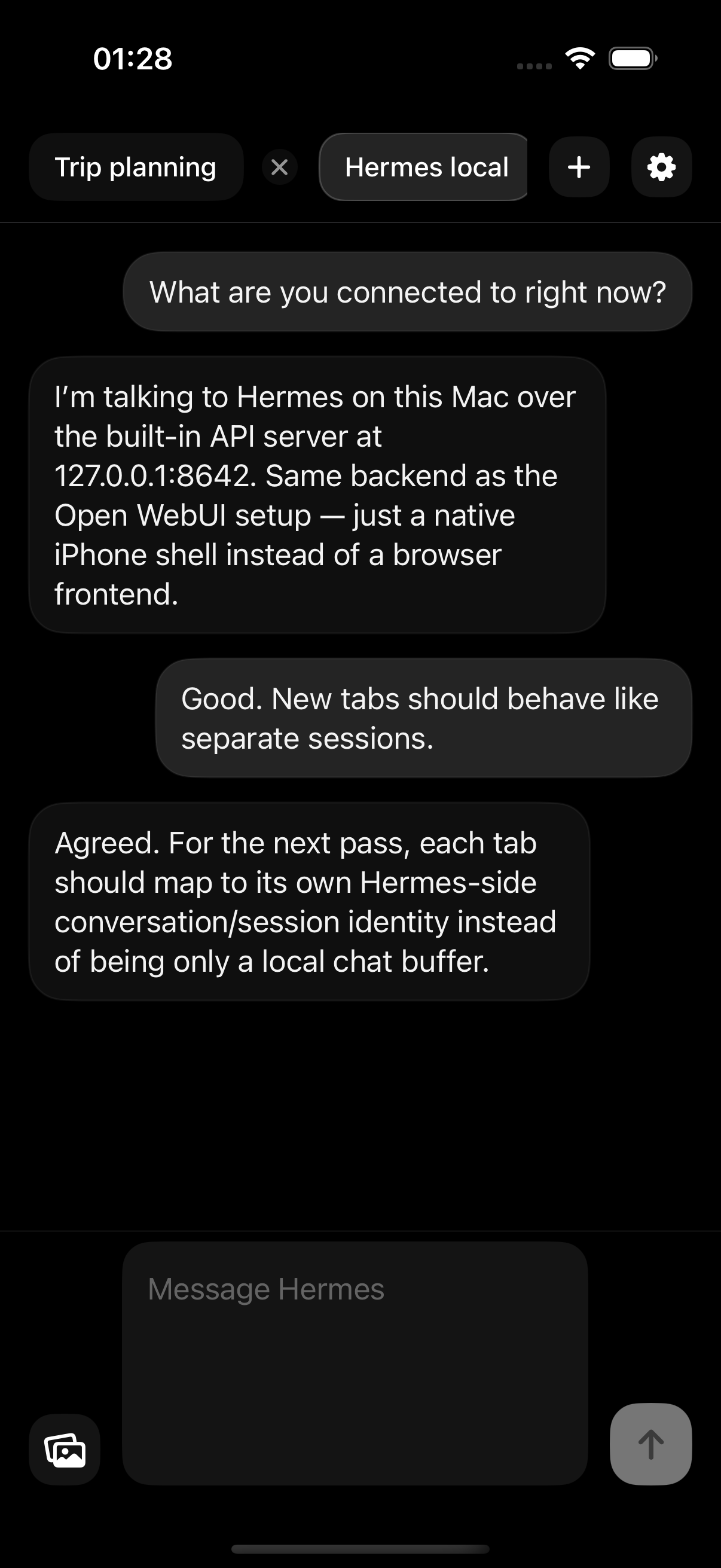

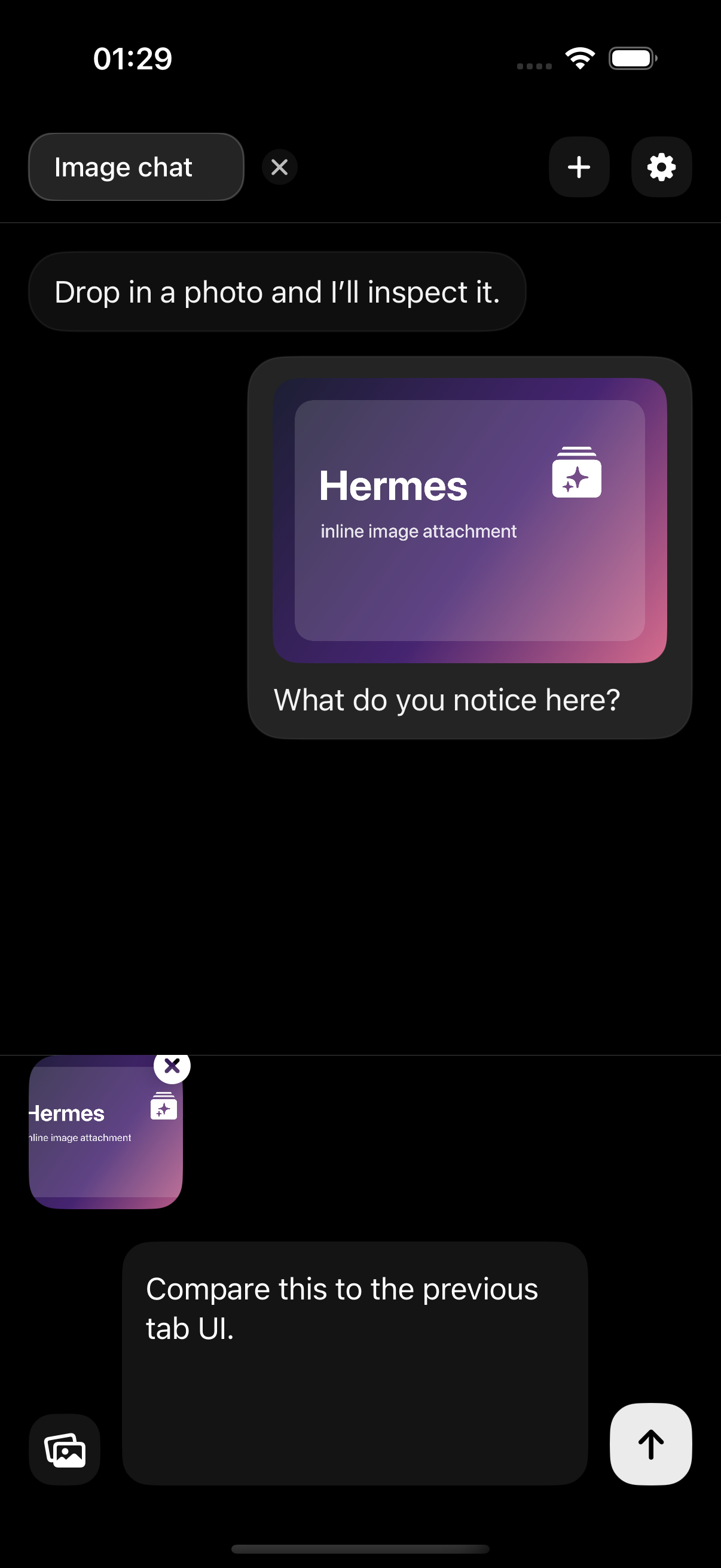

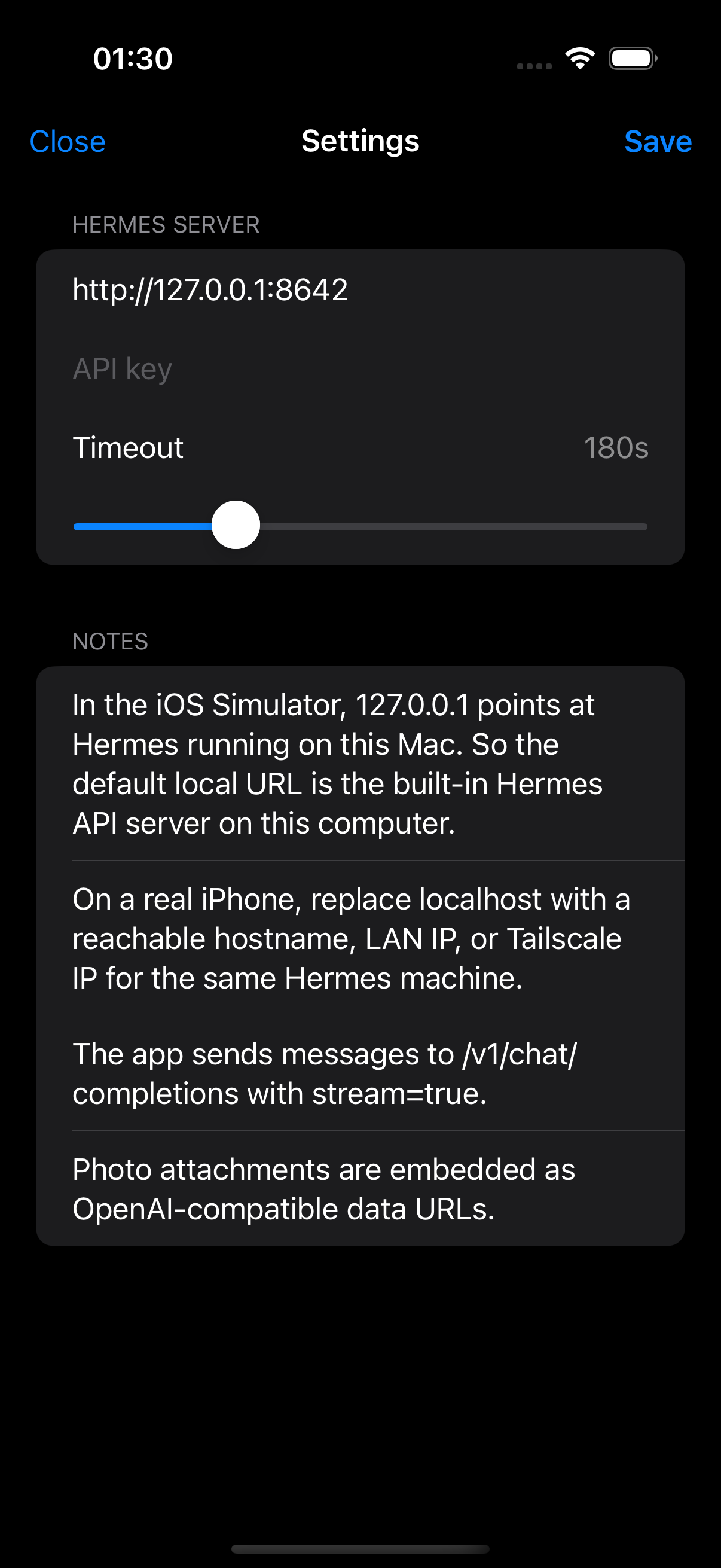

Tab-per-conversation chat client for Hermes.

Native SwiftUI app. Each tab is a conversation. Streams responses from the public Hermes proxy. Like Safari, but each window talks to the agent.

Architecture

- Native SwiftUI, iOS 17+

- POST /v1/chat/completions with SSE streaming

- Endpoint: opal-agent.decemberclaw.com

- Local persistence: Application Support/HermesTabs/state.json
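
A minimal sketch of the streaming side, in Python for brevity even though the client is SwiftUI. It assumes the proxy emits OpenAI-compatible chunks (`data: {...}` lines ending with `data: [DONE]`), which the /v1/chat/completions path suggests but the notes do not confirm; `iter_deltas` is a hypothetical name.

```python
import json
from typing import Iterable, Iterator


def iter_deltas(lines: Iterable[str]) -> Iterator[str]:
    """Yield text fragments from an SSE stream of chat-completion chunks.

    Assumes OpenAI-style chunks: choices[].delta.content holds the text.
    """
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank separators, ":" comments, other SSE fields
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":
            break  # end-of-stream sentinel
        chunk = json.loads(payload)
        for choice in chunk.get("choices", []):
            text = choice.get("delta", {}).get("content")
            if text:
                yield text
```

Each yielded fragment would be appended to the active tab's message. Persisting to state.json only after the stream ends would avoid rewriting the file on every fragment.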

Known issues

- Jank: Draft text bound to @Published store on every keystroke. Fix: local @State in composer view.

- Composer oversized. Fix: replace TextEditor with a vertical-axis TextField and .lineLimit(1...4)

- Tab close: Detached sibling button, not Safari-like integrated X

- Tabs = local history only. Path: migrate to /v1/responses with conversation param

- Image upload blocked — not supported through Hermes API yet